The PiE (Puzzle-solving in Ethics) Model

Ethics is about answering one crucial question: “What is the right thing to do?”

The PiE (puzzle-solving in ethics) Model focuses on this core question in a systematic manner, integrating ethical puzzle-solving into the whole process of innovation to ensure that ethical issues are handled in the right way and at the right time.

In 2018, we developed and started implementing the PiE Model to steer the practice and discourse on AI ethics from “ethics policing” with ethics reviews and approvals to “ethics integration” with collaborative and dynamic ethical puzzle-solving.

What is Puzzle-solving in Ethics?

In dealing with ethics, many get lost in rules, regulations, and approvals. In reality, ethical questions are like puzzles: we face complex situations where individual lives, societies, and even the world could be in a better state if we make the right decision. Often, we do not know what the right decision is, and we have to work it out.

Puzzle-solving in Ethics (PiE) is a comprehensive, systematic, analytic, critical, and innovative approach to ethical questions. It explicates the ethical puzzles and combines ethical reasoning with systems thinking and design thinking to find solutions.

The PiE Model goes beyond common approaches such as problem-solving: rather than focusing only on “problems”, it gives equal importance to ethical issues and risks and to ethical goals and opportunities. As an integrated ethics model, it goes beyond principles, ethics codes, and ethics reviews in its dynamic, collaborative, and innovative approach.

The PiE Model is founded on applied ethics and draws on the tools and literature developed in moral and political philosophy. On this foundation, the Model builds multidisciplinary collaborations, bringing ethics experts together with technology and domain experts to achieve its goals.

How does the PiE Model work?

Innovation happens when technology breaks into a domain: when wearables are used for health purposes, when the internet becomes a part of telecommunication, when visual recognition finds its way into transportation. Ethical puzzles arise when technology enters a domain and, as a result, our lives. To address ethical puzzles in innovation, the PiE Model integrates ethics analyses and solutions into every stage of innovation.

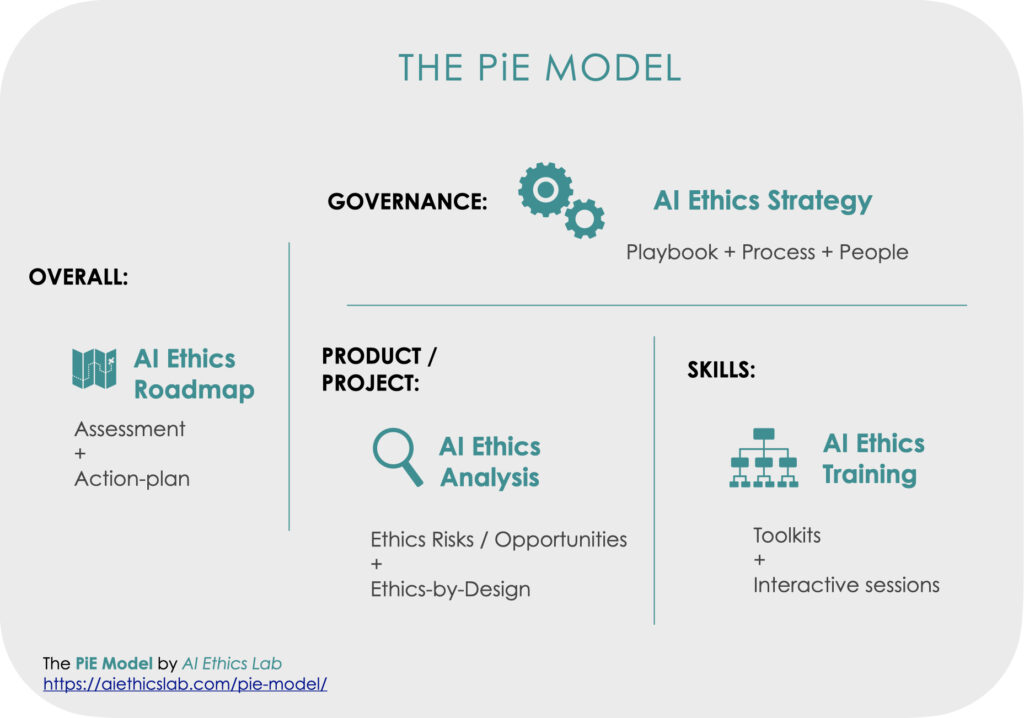

Specifically, we make the PiE Model a part of the innovation process by providing:

- AI ETHICS ROADMAP to assess the organization and design an action plan for its ethical needs and readiness;

- AI ETHICS STRATEGY to define operating procedures and to set a governance structure for recurrent complex ethical issues by creating organizational guidelines, processes, and structures;

- AI ETHICS ANALYSIS to detect the ethical puzzles of a product or a project and find solutions for them; and

- AI ETHICS TRAINING to equip creators, deployers, and managers of technology with tools and skills to become “first-responders” to navigate ethical issues.

Watch the GovLab Module on the PiE Model by the Lab’s director, Cansu Canca, Ph.D.

Who needs the PiE Model?

The simple answer: everyone who is engaged in the development or deployment of AI technologies.

If you are creating and developing AI technologies, if you are purchasing and deploying AI technologies, or if you are managing companies that develop or deploy AI technologies, you have to understand the ethical landscape that you are standing on. What are the risks and benefits of these technologies? Who is affected by them and how? Whose lives can be better with these technologies? How should you engage with these technologies so that you use them to create value? Without asking these and other core questions, the promising landscape of technology can turn into an ethical minefield.

We respect technology experts, and our goal is to help them create better innovations. The PiE Model combines our expertise in solving ethical puzzles with the creators’ and leaders’ expertise in innovation. By doing so, we enhance technology for the benefit of all.

Read our Forbes AI article, “A New Model for AI Ethics in R&D”, for more information on our model.

Watch the Lab’s director, Cansu Canca, Ph.D., present our model at Harvard Business School Digital Initiative, Digital Transformation Summit.

(Featured image with changes: fdecomite on Visual hunt)