PiE MODEL: PUZZLE-SOLVING IN ETHICS FOR AI INNOVATION

WHO DO WE WORK WITH?

Each stakeholder in AI development and deployment has a responsibility to ensure that ethical risks are addressed in the right way, at the right time.

Our PiE Model for ethical puzzle-solving is designed to help CREATORS, LEADERS, and INVESTORS integrate ethics into their organizations. We work with organizations BUILDING or DEPLOYING AI technologies to ensure that (1) ethical risks are addressed proactively and (2) ethical opportunities are seized to make technology better for society.

AIxEd Leadership Summit – Keynote by Cansu Canca: Where is Responsible AI in Higher Ed?

A premier full-day gathering of K-12 and higher-ed educators and ed-tech professionals.

Hear from leaders representing government, industry, and academia on the future of learning, workforce transformation, and the strategic implications of AI in education, with case studies and best practices.

Keynote: “AI impacts higher education across every front: teaching, learning, research, administration, and increasingly high-demand industry collaborations. In many respects, universities resemble other large, complex organizations navigating AI integration across their operations. But higher education is also distinct: it not only adopts AI systems, it develops them and trains the very people who will shape their future use. Despite deep in-house expertise, higher ed institutions have been remarkably slow to implement meaningful responsible AI practices. This keynote examines this gap and outlines concrete, actionable components for governance models that better protect, guide, and align institutional AI adoption with the core values and responsibilities of academic institutions.”

AI Ethics Lab co-presents: A Conversation on AI & Morality with Jamie Metzl & Cansu Canca

At a time when much of the public conversation is concerned with the many potential dangers of AI, Jamie Metzl argues that, when developed and deployed wisely, AI can be a force for good, protect us from some modern societal harms, help shape a modern moral compass for humanity, and herald a more ethically enriching future for ourselves, our communities, our species, and our planet. As technological progress accelerates, Jamie’s new book “The AI Ten Commandments” challenges us to find new ways to use AI to expand human wisdom at new scales and, perhaps paradoxically, to help enrich our humanity.

Prof. Cansu Canca, Founder and Director of AI Ethics Lab and Research Associate Professor in Philosophy at Northeastern University, will join Jamie to challenge him. Prof. Manolis Kellis, Professor of Computer Science at MIT, will help moderate the discussion.

The Toolkit for Responsible AI Innovation in Law Enforcement, developed with INTERPOL and UNICRI, is a practical resource designed to move agencies from principle to practice in responsible AI adoption.

AI is already reshaping policing—but most agencies lack the structures to deploy it responsibly. This Toolkit closes that gap with concrete frameworks, decision tools, and implementation pathways that support readiness assessment, risk management, and the integration of AI systems in legally grounded and ethically defensible ways.

Built for real-world use, it spans the full lifecycle of AI systems—from early exploration and procurement to deployment, oversight, and governance. Structured as a modular set of resources, it enables agencies to institutionalize responsible AI innovation, particularly in high-stakes and ambiguous contexts where existing approaches fall short.

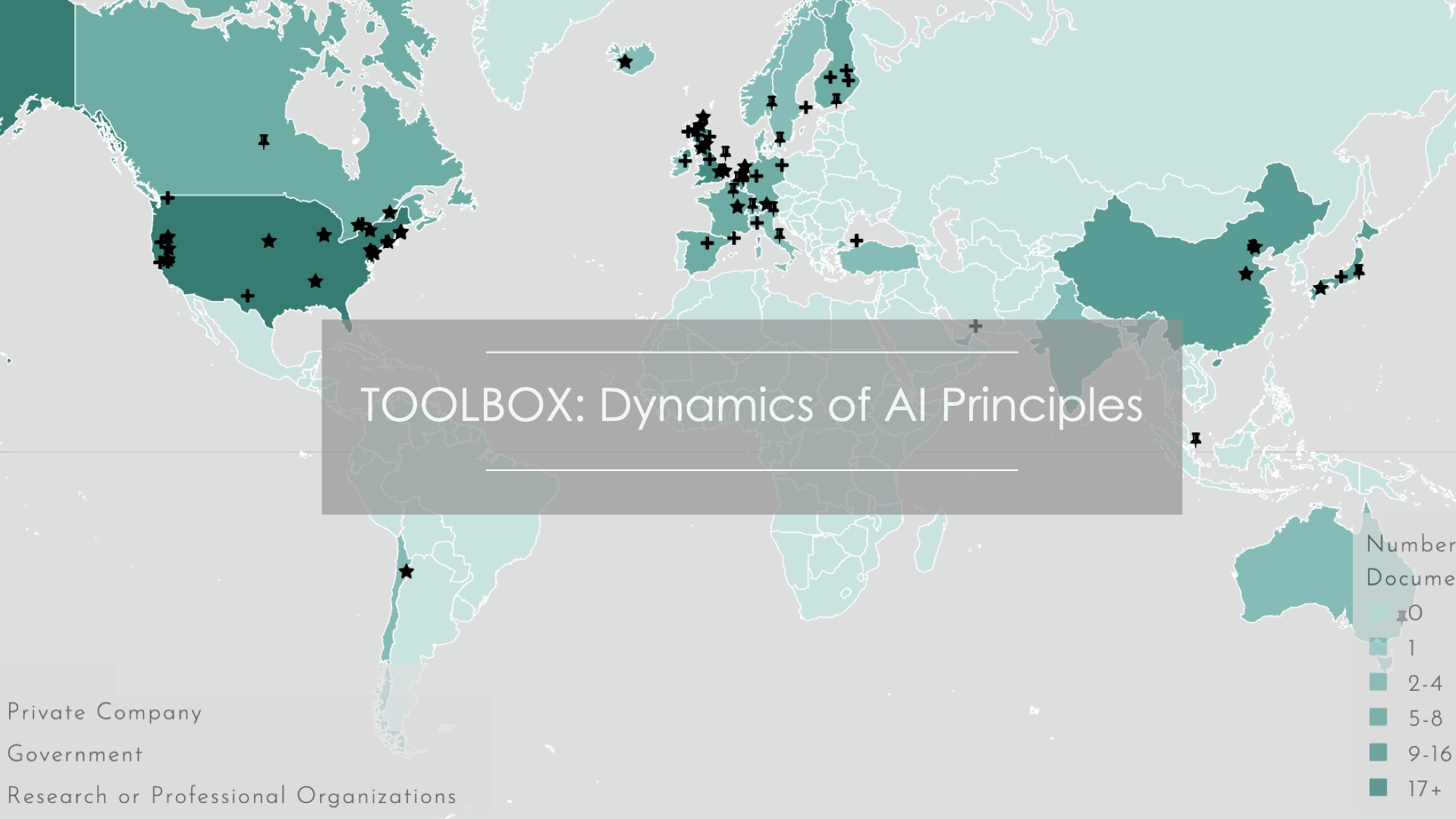

The Dynamics of AI Principles was developed by AI Ethics Lab to visualize the emergence of AI ethics principles around the world and across sectors. This toolbox helps make sense of early trends by tracking and systematizing the bewildering and growing number of AI principles out there.

With this interactive toolbox, you can:

I. use the Map to sort, locate, and visualize AI principles by

  a. country and region,

  b. time of publication,

  c. types of publishing organizations,

II. search documents or see the full list and find their summaries,

III. compare documents and their key points,

IV. visualize and compare the distribution of core principles, and

V. use the Box to systematize principles and evaluate technologies.